December 08, 2005

The Nexus of Guts and Glitz

Count on Martin Fowler, that technosexual trendsetter at the tony House of Thoughtworks, to stir up the season's most colorful and provocative design dustups. Okay, I'll stop, but here's the controversy.

Martin has of late fallen under the spell of Ruby, a dynamic postmodern scripting language billed as a pragmatic amalgam of Smalltalk and Java and what-have-you. Smalltalk's soul in Java's clothing, with a dash of Python. I have been hearing a lot of good things about Ruby.

Anyway, many proponents of Ruby, it would seem, espouse what they call Humane Interface design. A humane interface places a premium on programmer convenience, and may be quite a bit larger than the Minimal Interface necessary to expose an abstraction's basic functionality. The humane approach provides a more ornate, more verbose vocabulary to the programmer, as opposed to the smaller, more austere, more Spartan, ostensibly more simple minimalist interface. Joey deVilla offers a gonzo follow-up take on this controversy here.

Their poster child example is a method, "last", that returns the final element of a collection. In Ruby (and Smalltalk, and ...), a method to do just this is provided. Java interface designers are cast as the Spartans in this tale, providing only a basic getter. To retrieve the last element of a collection, something akin to a.get(a.size()-1) must be executed.
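To make the contrast concrete, here is a small Java sketch. The helper class HumaneLists and its last method are my own illustrative names, not part of any library; the point is only that the "humane" call can be written as one line on top of the Spartan getter.

```java
import java.util.List;

// Hypothetical helper, for illustration only.
class HumaneLists {
    // The humane vocabulary: one word for "give me the final element".
    // It is built entirely from the minimal interface (get and size).
    // Like the bare expression, it throws on an empty list.
    static <T> T last(List<T> a) {
        return a.get(a.size() - 1);
    }
}
```

With this in place, a caller writes HumaneLists.last(a) where the minimalist would spell out a.get(a.size() - 1); the two expressions do the same work.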

Is this Marie Antoinette vs. Wal-Mart? Loincloths? Baroque and rococo vs. Frank Lloyd Wright and the modernists?

At first blush, this would seem an easy call. My Smalltalk roots (among other things) are likely showing when I say I see no harm in a larger, more expressive vocabulary.

And there I might leave it, if this did not raise an even more interesting issue, which is: What if they are both right? How is that for equivocation?

Now the reader need not fear, for I still intend to cast my lot on the side of the humane, and not the stingy, though when the argument is cast in such terms, it is hard not to feel a tad manipulated, and after all, smaller can be simpler, I suppose... To be anti-humane is to be in favor of what? Euthanizing kittens?

Why can't we provide capable, expressive, human-friendly abstractions that are themselves built around minimal cores?

The motivation for this is the need for what I call a Core or, better yet, a Nexus: that is, a single, central, and yes, minimal subset of the public repertoire of an object through which all definitive, authoritative message traffic, both external and internal, must pass.

In Smalltalk, programmers were traditionally rather casual about which methods were private, or fundamental, and which were written to ride atop this rock-bottom level vocabulary. Protocol organization helped to convey this to some degree, but such conventions as there were were frequently honored primarily in the breach.

Don't get me wrong. I'm not arguing that these conventions be formalized and enforced (at least not yet; not here); rather, I'm arguing that this kind of "once and only once" is a guarantee that programmers want to ensure.

Why? So we can wrap the one place that gets and sets things, for instance. I want to know, for example, that any getter on a collection bottoms out at a single, definitive call to something like get().
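Here is a rough sketch of what that guarantee buys, in Java. The class TracedSequence and its read counter are hypothetical, invented for this illustration. Because every convenience getter bottoms out at get(), a single line placed there observes all read traffic.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical collection whose every read passes through get(int).
class TracedSequence<T> {
    private final List<T> items = new ArrayList<>();
    private int reads = 0; // touched only inside get()

    void add(T item) { items.add(item); }
    int size() { return items.size(); }

    // The single, definitive read path: wrap this and you wrap every getter.
    T get(int index) {
        reads++;
        return items.get(index);
    }

    // Convenience getters never reach into `items` directly.
    T first() { return get(0); }
    T last() { return get(size() - 1); }

    int reads() { return reads; }
}
```

Swap the counter for logging, caching, or an access check and the same reasoning holds. If first() or last() reached around get(), none of those would be trustworthy.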

So, indulge me for a minute. One way to do this might be:

Problem: you want to ensure that all references to an object's internal resources are made through a fixed, restricted set of methods.

Solution: refactor all the methods in this core, this nexus, into a separate component. Construct an entourage of wrappers (of various sorts) that employ the broader, more extensive vocabulary, and forward those in the minimal subset to this core; this nexus.

The use of a separate component guarantees that all the humane embellishments provided to the outside world must go through the minimal argot supplied by the core. This design also permits alternative implementations, such as a sparse array, to be plugged in in place of the standard core.
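A minimal sketch of this arrangement in Java might look like the following. All of the names (SequenceCore, ArrayCore, SparseCore, HumaneSequence) are placeholders of my own choosing, and the three-method core is just one assumption about what the minimal subset would contain.

```java
import java.util.HashMap;
import java.util.Map;

// The nexus: the minimal vocabulary through which all traffic passes.
interface SequenceCore<T> {
    T get(int index);
    void set(int index, T value);
    int size();
}

// The standard, dense core.
class ArrayCore<T> implements SequenceCore<T> {
    private final Object[] slots;
    ArrayCore(int size) { slots = new Object[size]; }
    @SuppressWarnings("unchecked")
    public T get(int index) { return (T) slots[index]; }
    public void set(int index, T value) { slots[index] = value; }
    public int size() { return slots.length; }
}

// A pluggable alternative: stores only the slots that were actually set.
class SparseCore<T> implements SequenceCore<T> {
    private final Map<Integer, T> slots = new HashMap<>();
    private final int size;
    SparseCore(int size) { this.size = size; }
    public T get(int index) { check(index); return slots.get(index); }
    public void set(int index, T value) {
        check(index);
        if (value == null) slots.remove(index); else slots.put(index, value);
    }
    public int size() { return size; }
    private void check(int index) {
        if (index < 0 || index >= size) throw new IndexOutOfBoundsException("index " + index);
    }
}

// The humane wrapper: holds no state of its own, so it cannot bypass the core.
class HumaneSequence<T> {
    private final SequenceCore<T> core;
    HumaneSequence(SequenceCore<T> core) { this.core = core; }

    // The minimal subset is simply forwarded.
    T get(int index) { return core.get(index); }
    void set(int index, T value) { core.set(index, value); }
    int size() { return core.size(); }

    // The embellishments ride atop the forwarded methods.
    T first() { return get(0); }
    T last() { return get(size() - 1); }
    boolean isEmpty() { return size() == 0; }
}
```

Handing the wrapper a SparseCore with a million slots instead of an ArrayCore changes the storage strategy without touching first() or last(), since neither ever sees the representation.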

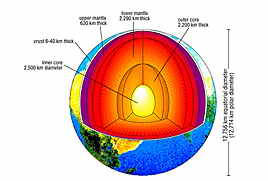

Such a design is layered in the strict, traditional sense that many so-called layered designs are not. Like an onion.

Alternative designs involving inheritance using a private core with protected accessors are possible where efficiency concerns are at a premium, and runtime plug-in substitution seems unnecessary. Schemes employing interface inheritance are possible to envision too.
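The inheritance variant might be sketched like this in Java. Again, the names (InheritedCore, InheritedSequence, coreGet and friends) are hypothetical. The storage is private to the base class, so subclasses can only reach it through the protected accessors, and there is no second object to forward to.

```java
// The core as a base class: private state, protected accessors.
abstract class InheritedCore<T> {
    private final Object[] slots; // invisible to subclasses

    protected InheritedCore(int size) { slots = new Object[size]; }

    // final, so a subclass cannot quietly reroute the nexus
    @SuppressWarnings("unchecked")
    protected final T coreGet(int index) { return (T) slots[index]; }
    protected final void coreSet(int index, T value) { slots[index] = value; }
    protected final int coreSize() { return slots.length; }
}

// The humane vocabulary lives in the subclass.
class InheritedSequence<T> extends InheritedCore<T> {
    InheritedSequence(int size) { super(size); }

    public T get(int index) { return coreGet(index); }
    public void set(int index, T value) { coreSet(index, value); }
    public int size() { return coreSize(); }

    public T first() { return coreGet(0); }
    public T last() { return coreGet(coreSize() - 1); }
}
```

The trade is the one described above: one object instead of two and no forwarding hop, at the cost of fixing the core at compile time rather than plugging it in at runtime.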

This was the de facto solution to this problem seen in a number of vintage Smalltalk classes. Indeed, such internal layering has been suggested as a smell that indicates that a new component need be culled from a class via refactoring.

So you can have simple guts, and flashy, interchangeable skins. You can almost have your cake and eat it too. Marie Antoinette would be pleased. The filigree, the utility methods, are in the Decorators, while the core, the essence, is in the decorated cores.

That there be a nexus can be thought of as a design principle; its embodiment in recurring designs makes it a candidate for patternhood. Let's see, we need two miracles for beatification, and two more for canonization...

There is more than a hint of Handle / Body here, among other things. A scoop of Class Adapter? A full dress treatment of this seat-of-the-pants, inchoate proto-pattern notion might address these issues.

The piper is paid, as with so many patterns, at Creation Time, Provisioning Time, when the structural edifice that drives the computation is constructed, when the web of objects is woven. But that is a tale for another post...

Here's why I'm partial to the somewhat obscure word nexus, over core, for this intent. While the more familiar core conveys the centrality, the primacy in this intent, it doesn't capture the dynamic, causal interconnectedness of this intent as precisely as does nexus. I've been looking and listening for a long time for a term that better gets across the idea that a method or object be at a definitive, authoritative chokepoint or bottleneck, at a pass at which functionality could be headed-off, and this might be it. And no, I haven't read very much by Henry Miller. From M-W:

nexus

Etymology: Latin, from nectere to bind

- CONNECTION, LINK; also, a causal link

- a connected group or series

- CENTER, FOCUS